Loading questions...

Updated

Want a break from the ads?

Become a Supporter and enjoy a completely ad-free experience, plus unlock Learn Mode, Exam Mode, AstroTutor AI, and more.

Create a free account to unlock all questions for this exam.

Log In / Sign UpNote: This question is part of a series of questions that use the same scenario. For your convenience, the scenario is repeated in each question. Each question presents a different goal and answer choices, but the text of the scenario is exactly the same in each question in this series.

You are planning a big data infrastructure by using an Apache Spark cluster in Azure HDInsight. The cluster has 24 processor cores and 512 GB of memory.

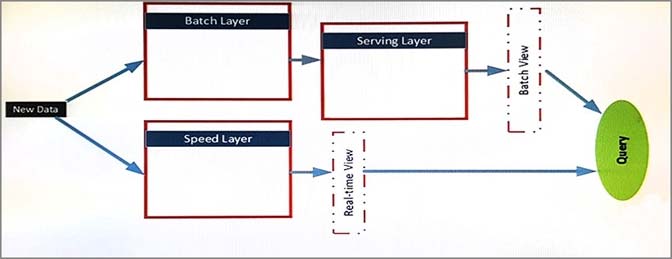

The architecture of the infrastructure is shown in the exhibit. (Click the Exhibit button.)

The architecture will be used by the following users:

✑ Support analysts who run applications that will use REST to submit Spark jobs.

✑ Business analysts who use JDBC and ODBC client applications from a real-time view. The business analysts run monitoring queries to access aggregate results for 15 minutes. The results will be referenced by subsequent queries.

✑ Data analysts who publish notebooks drawn from batch layer, serving layer, and speed layer queries. All of the notebooks must support native interpreters for data sources that are batch processed. The serving layer queries are written in Apache Hive and must support multiple sessions. Unique GUIDs are used across the data sources, which allow the data analysts to use Spark SQL.

The data sources in the batch layer share a common storage container. The following data sources are used:

✑ Hive for sales data

✑ Apache HBase for operations data

✑ HBase for logistics data by using a single region server

You need to ensure that the analysts can query the logistics data by using JDBC APIs and SQL APIs.

Which technology should you implement?

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

After you answer a question in this sections, you will NOT be able to return to it. As a result, these questions will not appear in the review screen.

You are building a security tracking solution in Apache Kafka to parse security logs. The security logs record an entry each time a user attempts to access an application. Each log entry contains the IP address used to make the attempt and the country from which the attempt originated.

You need to receive notifications when an IP address from outside of the United States is used to access the application.

Solution: Create two new brokers. Create a file import process to send messages. Run the producer.

Does this meet the goal?

You have an Azure HDInsight cluster.

You need a build a solution to ingest real-time streaming data into a nonrelational distributed database.

What should you use to build the solution?

DRAG DROP -

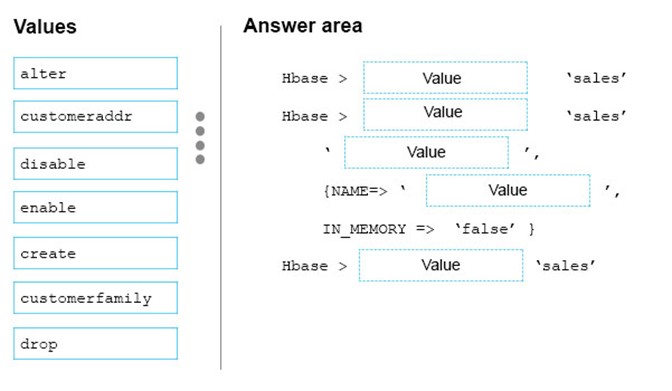

You have an Apache HBase cluster in Azure HDInsight. The cluster has a table named sales that contains a column family named customerfamily.

You need to add a new column family named customeraddr to the sales table.

How should you complete the command? To answer, drag the appropriate values to the correct targets. Each value may be used once, more than once, or not at all. You may need to drag the split bar between panes or scroll to view content.

NOTE: Each correct selection is worth one point.

Select and Place:

You have an Apache Hive table that contains one billion rows.

You plan to use queries that will filter the data by using the WHERE clause. The values of the columns will be known only while the data loads into a Hive table.

You need to decrease the query runtime.

What should you configure?

You plan to copy data from Azure Blob storage to an Azure SQL database by using Azure Data Factory.

Which file formats can you use?

You have an Apache Spark cluster in Azure HDInsight.

You plan to join a large table and a lookup table.

You need to minimize data transfers during the join operation.

What should you do?